Jun 30, 2022

TECH & DESIGN

Accelerating automotive development by combining real-life and virtual-environment approaches (part two)

Problem solving of AD/ADAS development in the virtual environments based on video game development technologies

Takahiro Koguchi, AD&ADAS Systems Engineering Div.

Takahiro Koguchi has built up experience in games and programming since he was a young child, and went on to study quantum mechanics, chaos simulations and other such fields at university. Koguchi joined a video game maker after graduation and worked there for 15 years before switching to DENSO. He has made use of R&D skills in the area of virtual environments from his previous job, to develop driver assistance systems using sophisticated techniques.

Huge amounts of learning data are required to develop AI used in driving support systems. DENSO Corporation is making use of video game development technologies to create learning data used for AI teaching, develop technologies for parking assist systems, and other such purposes. By combining virtual environments and AI technology, the company is actively focusing on next-generation mobility and conducting verification testing for technologies that were once thought impossible.

(This article is the second of a two-part series titled “Accelerating automotive development by combining real-life and virtual-environment approaches.”)

Creating large quantities of AI learning data by using game development technologies

You mentioned sensing function development as your second usage of the Unreal Engine. How are you using virtual environments in this area?

Takahiro Koguchi: To explain that, let me first describe how advanced driver assistance systems (ADAS) process information.

With ADAS, the system must understand the conditions in the surrounding area via information obtained through cameras, sensors and other devices. In other words, the system must make judgments such as “it’s safe to move” or “this area is not safe to enter” based on such information. To accomplish this, it must be able to figure out what the objects are that appear in camera images.

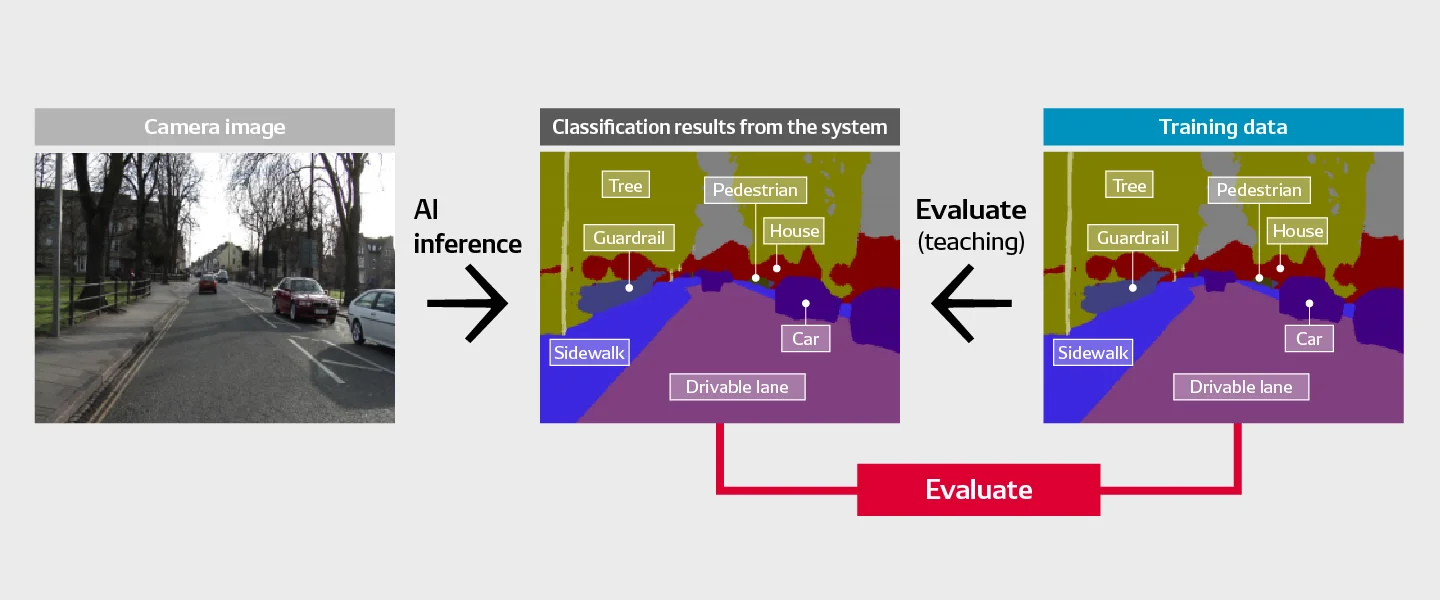

One step in this overall process, known as semantic segmentation, involves analyzing each image pixel by pixel and classifying the objects and terrain as roads, traffic signs, human beings and so forth. For example, on a sunny day with no image blur, the system may be able to detect a white line on the road—provided it is not dirty—based on pixel color intensity. However, it’s difficult to use this simplistic approach when attempting to identify various other objects encountered during a journey, which is why most developers apply the “deep learning” machine learning approach.
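As a rough illustration of the simplistic intensity-based approach mentioned above, the following sketch flags bright pixels in a synthetic grayscale image. The function name, the threshold of 200 and the pixel values are assumptions chosen for illustration, not values from DENSO's systems. It also shows why the approach is fragile: a dirty, darker line falls below the threshold and is missed.

```python
import numpy as np

def detect_bright_pixels(gray: np.ndarray, threshold: int = 200) -> np.ndarray:
    """Return a boolean mask of pixels at least as bright as `threshold`."""
    return gray >= threshold

# Synthetic 4x6 grayscale "road": dark asphalt (60) with one bright lane line (230).
road = np.full((4, 6), 60, dtype=np.uint8)
road[:, 2] = 230
clean_mask = detect_bright_pixels(road)

# The same line, but dirty (150): it no longer clears the fixed threshold.
dirty_road = road.copy()
dirty_road[:, 2] = 150
dirty_mask = detect_bright_pixels(dirty_road)

print(int(clean_mask.sum()))  # 4 pixels flagged on the clean line
print(int(dirty_mask.sum()))  # 0 pixels flagged on the dirty line
```

A learned model is trained to cope with this kind of variation, which a fixed threshold cannot do.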

In particular, supervised deep learning develops an AI model by training it on large quantities of teaching data, that is, data that provides the correct answers to the problems the AI encounters. By feeding images into a model trained in this way, it’s possible to correctly classify the objects encountered in each scene. The challenge with this approach is deciding which types of teaching data to feed into the AI model. With semantic segmentation, we create annotated teaching data by tagging each pixel with its proper category.

This must be done for massive quantities of data, ranging from several thousand to several million images. That’s why, in recent years, engineers in this field have been using CG images in order to create teaching data for the deep learning process.

Many people find it hard to believe that AI models created based on CG scenes can actually be used to detect objects in real-life photographic images, but many academic studies have already shown that AI model teaching using a mixture of CG and real-life photographic images does improve the recognition rate.

In other words, virtual environments can be used to create teaching data for AI deep learning?

Koguchi: Exactly! The Unreal Engine we use in that process includes a deferred rendering function, which can output not only the final rendered image (the image computed by the engine) but also the intermediate images held in buffers along the way. Correct-answer tags for teaching data can be attached to these buffer images, which dramatically boosts the efficiency of creating teaching data.
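To make the idea of tagging buffer images more concrete, here is a minimal sketch that turns a color-coded buffer into a per-pixel class map of the kind used as segmentation teaching data. It assumes the renderer has already written one flat color per object category into an RGB buffer; the palette, the class indices and the function name are hypothetical and are not taken from the Unreal Engine or from DENSO's pipeline.

```python
import numpy as np

# Hypothetical palette: RGB color written to the buffer -> class index.
PALETTE = {
    (128, 64, 128): 0,  # road
    (220, 220, 0): 1,   # traffic sign
    (220, 20, 60): 2,   # person
}
IGNORE = 255  # label for pixels whose color is not in the palette

def color_buffer_to_labels(buf: np.ndarray) -> np.ndarray:
    """Convert an H x W x 3 color-coded buffer into an H x W class-index map."""
    labels = np.full(buf.shape[:2], IGNORE, dtype=np.uint8)
    for color, cls in PALETTE.items():
        match = np.all(buf == np.array(color, dtype=buf.dtype), axis=-1)
        labels[match] = cls
    return labels

# Tiny 2x3 buffer: mostly road, one sign pixel, one person pixel.
buf = np.zeros((2, 3, 3), dtype=np.uint8)
buf[:, :] = (128, 64, 128)
buf[0, 1] = (220, 220, 0)
buf[1, 2] = (220, 20, 60)

labels = color_buffer_to_labels(buf)
print(labels.tolist())  # [[0, 1, 0], [0, 0, 2]]
```

Because the labels come straight from the renderer, no manual pixel-by-pixel annotation is needed for such images.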

When working with virtual environments in this way, what types of situations are used in teaching data?

Koguchi: For deep-learning teaching data, it’s difficult to predict which data will facilitate correct visual recognition results during system operation. The AI must learn from a diverse array of data in order to produce a high-quality AI model.

In the case of virtual environments, we create uncommon scenes in which traffic signs and very similar commercial signs are placed near each other in order to test the AI’s ability to discern the traffic signs correctly. We refer to this type of scene as a “rare case,” and although such scenes are rare in real life, it’s very easy for us to create rare cases using a virtual environment.

Another big problem with ADAS recognition relates to objects on the road surface itself. Often this turns out to be an animal, and of course in real life we can’t get various types of animals to walk across a road in order to test the system. This is another area where virtual environments are very useful when creating AI learning data.

A parking assist system that can visualize difficult-to-see angles

As your third usage of the Unreal Engine, you mentioned the human–machine interface (HMI) function, and gave some R&D examples in the area of user interfaces for parking assist systems. Could you explain in more detail?

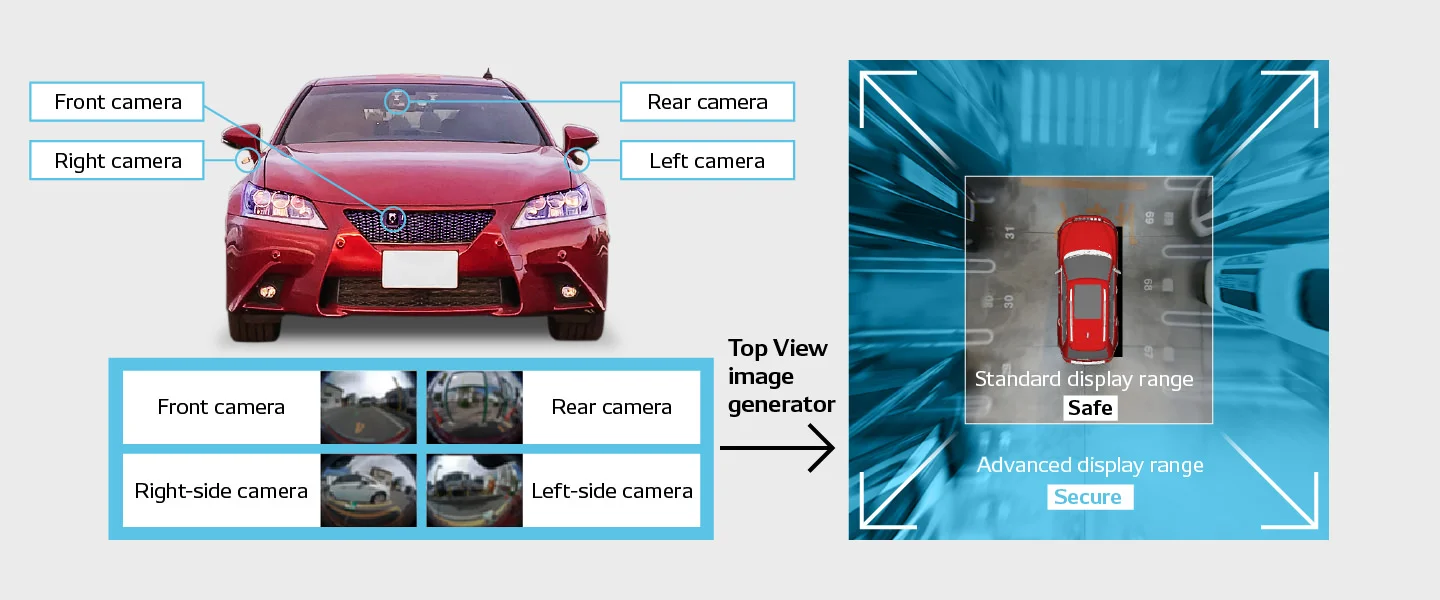

Koguchi: Certainly. Vehicles use cameras mounted on the front, rear, left and right sides to capture images, which are then combined to create a simulated top-down view of the vehicle. If we could find a way to expand the range of this top-down view and eliminate some of the image warping that occurs, it would give people more confidence when using it to park their vehicles. This is an area where one of our joint R&D partners, Yosuke Hattori, is at the cutting edge.

When looking at images produced by a vehicle’s cameras, it’s sometimes hard to grasp the actual distances and positions, isn’t it?

Koguchi: That’s right, and if we simply change the angle of the onboard cameras to get a wider view, the image will remain warped, making it difficult for the user to get a good, intuitive understanding of the vehicle’s position. To solve this problem, we adopted various approaches using virtual environments in order to develop technologies for generating more useful images.

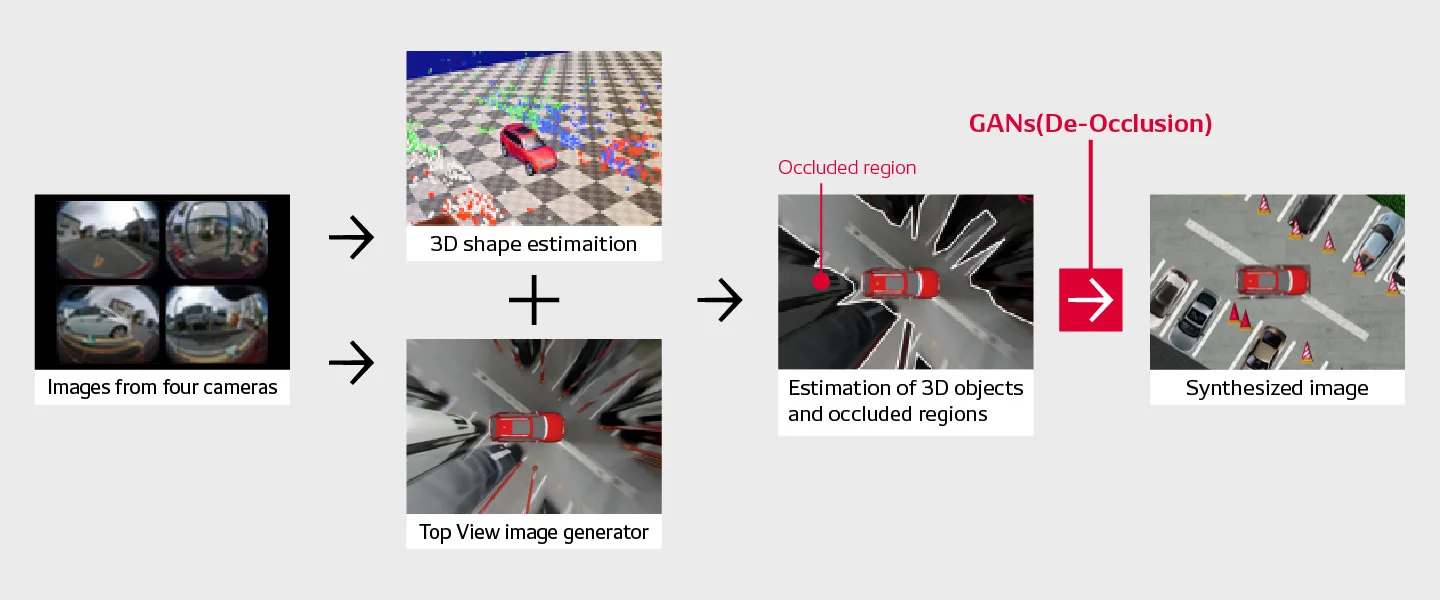

Based on 2D images from cameras on all sides of the vehicle, the system uses SfM, or “structure from motion,” technology to estimate 3D forms in the area surrounding the car. SfM utilizes shared points of reference found in multiple photographs of a scene in order to estimate the original depth distances. This enables the creation of a composite image for the surrounding area based on what is seen by the four vehicle cameras, but unfortunately the resulting image becomes unnaturally stretched out.
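The core geometric step behind this kind of depth estimation can be sketched as triangulating one point seen from two camera positions. This is a simplified illustration only: it assumes the two camera poses and the intrinsics are already known, whereas a full SfM pipeline also estimates the camera motion and matches feature points automatically. The intrinsic matrix, the 0.5 m camera offset and all function names are assumptions for the example.

```python
import numpy as np

def triangulate(P1, P2, x1, x2):
    """Linear (DLT) triangulation of one 3D point from two pixel observations."""
    A = np.stack([
        x1[0] * P1[2] - P1[0],
        x1[1] * P1[2] - P1[1],
        x2[0] * P2[2] - P2[0],
        x2[1] * P2[2] - P2[1],
    ])
    # The homogeneous solution is the right singular vector with the smallest singular value.
    _, _, vt = np.linalg.svd(A)
    X = vt[-1]
    return X[:3] / X[3]

def project(P, X):
    """Project a 3D point to pixel coordinates with projection matrix P."""
    x = P @ np.append(X, 1.0)
    return x[:2] / x[2]

# Assumed pinhole intrinsics (focal length 500 px, principal point at 320, 240).
K = np.array([[500.0, 0.0, 320.0], [0.0, 500.0, 240.0], [0.0, 0.0, 1.0]])
# First view at the origin; second view after the camera moved 0.5 m along x.
P1 = K @ np.hstack([np.eye(3), np.zeros((3, 1))])
P2 = K @ np.hstack([np.eye(3), np.array([[-0.5], [0.0], [0.0]])])

X_true = np.array([1.0, 0.5, 4.0])  # a point 4 m in front of the first camera
X_est = triangulate(P1, P2, project(P1, X_true), project(P2, X_true))
print(np.round(X_est, 3))
```

The recovered third coordinate is the depth that the top-down correction relies on.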

Using depth-distance information gained by using SfM technology, we can correct the top-down image; this gets rid of the stretching problem but leaves blank sections where the cameras cannot see. These are known as occluded regions. A two-stage algorithm is then used to detect the edges of these regions and restore them, and then fill in the gaps in a natural-looking way. This GAN-based image inpainting algorithm is known as EdgeConnect, and Yusuke Sekikawa of DENSO’s IT Laboratory selected it for us. Previously, we had tried using IntroVAE, which uses variational autoencoders (VAEs) and does not perform edge restoration, but this didn’t work well for us because it caused distortion in the vehicle outline.
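To picture how blank sections arise, the sketch below drops estimated ground positions into a top-down grid and marks every cell that received no point as occluded. It is a toy model under stated assumptions: the grid size, the metric extent and the function name are hypothetical, and it does not reproduce EdgeConnect or the project's actual processing. A mask like this is the kind of input an inpainting step would be asked to fill.

```python
import numpy as np

def occlusion_mask(points_xy: np.ndarray, extent: float, cells: int) -> np.ndarray:
    """Return a cells x cells boolean grid; True marks cells no observed point fell into."""
    # Map metric coordinates in [-extent, extent) to integer grid indices.
    idx = np.floor((points_xy + extent) / (2 * extent) * cells).astype(int)
    inside = np.all((idx >= 0) & (idx < cells), axis=1)
    seen = np.zeros((cells, cells), dtype=bool)
    seen[idx[inside, 1], idx[inside, 0]] = True  # row = y index, column = x index
    return ~seen

# Observed points cover only the left half of a 4 m x 4 m area around the car.
xs = np.array([-1.5, -0.5])
ys = np.array([-1.5, -0.5, 0.5, 1.5])
points = np.array([(x, y) for x in xs for y in ys])

mask = occlusion_mask(points, extent=2.0, cells=4)
print(mask.astype(int))
```

In this example the two right-hand columns of the grid are reported as occluded because no point landed there.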

When you say “blank sections” and “gaps,” you’re referring to areas that the cameras cannot photograph, correct? How is it possible to fill in areas of an image that cannot be seen?

Koguchi: We use deep learning frameworks known as GANs, or generative adversarial networks, which can generate sections of photographs, illustrations and other images that never existed in the original data. The underlying principle behind GANs is competition between the “generator,” which plays the role of counterfeiter and creates fake images, and the “discriminator,” which serves as a sort of police officer.

The generator tries to produce image elements that resemble reality as closely as possible, while the discriminator judges whether images are real or fake. Through repeated competition-based learning in which the generator tries to make images so real that they can fool the discriminator, it’s possible to eventually produce realistic images.
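The competition described above is usually expressed as two opposing loss functions. The following sketch computes them with NumPy from discriminator outputs, using the common binary cross-entropy formulation with a non-saturating generator loss. That formulation is a standard textbook choice and an assumption here; the interview does not say which losses the project used, and the probability values are made up for illustration.

```python
import numpy as np

def bce(pred: np.ndarray, target: np.ndarray) -> float:
    """Binary cross-entropy between predicted probabilities and 0/1 targets."""
    eps = 1e-7
    pred = np.clip(pred, eps, 1 - eps)
    return float(-np.mean(target * np.log(pred) + (1 - target) * np.log(1 - pred)))

def discriminator_loss(d_real: np.ndarray, d_fake: np.ndarray) -> float:
    """The discriminator wants real images scored 1 and generated images scored 0."""
    return bce(d_real, np.ones_like(d_real)) + bce(d_fake, np.zeros_like(d_fake))

def generator_loss(d_fake: np.ndarray) -> float:
    """The generator wants its images scored as real (1) by the discriminator."""
    return bce(d_fake, np.ones_like(d_fake))

# Early in training: the discriminator easily spots the fakes.
d_real = np.array([0.9, 0.8])
d_fake_early = np.array([0.1, 0.2])
# Later: the fakes are convincing enough to be scored as a coin flip.
d_fake_late = np.array([0.5, 0.5])

print(generator_loss(d_fake_early) > generator_loss(d_fake_late))  # True
print(discriminator_loss(d_real, d_fake_early) < discriminator_loss(d_real, d_fake_late))  # True
```

As the generator improves, its loss falls while the discriminator's loss rises, which is the tug-of-war that drives both networks.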

How do you prepare the data to be used for AI model learning via GANs?

Koguchi: That’s where virtual environments are very helpful. To create correct-answer teaching data in a real-life environment, it’s necessary to photograph the actual view from above using a camera-equipped drone or the like. With virtual environments, however, we can place objects anywhere and then view the scene freely from any angle. It’s also possible, of course, to install a camera on top of the car in order to gather top-down images. In short, virtual-environment-based data can be used to supply the correct answers used in teaching data.

Our project team created a virtual-environment model of the parking lot, and then generated around 100,000 top-down images. We then used these to teach an AI model, which was used to generate TopView images, and the results were good. We were able to ensure that the system correctly identified other vehicles in the images as vehicles. It normally takes a very long time to develop AI models of this sort, but we were able to finish the TopView image generator model in just two months.

Moving forward, we will continue to improve the quality of virtual-environment models, add images from real environments for AI learning purposes, and make other efforts to boost accuracy.

I imagine GAN-generated images are not identical to real-life images?

Koguchi: Yes, that’s a problem. Images based on GAN estimations may not exactly match the real thing, so if we go ahead and use such images in a future ADAS application, we will make sure to clearly indicate which portions of the image are GAN-generated estimates—we would never just use a generated image as-is.
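One simple way to indicate estimated portions is to tint them in the displayed image. The sketch below blends a color into the pixels covered by a mask of generated regions. The red tint, the blend strength and the function name are illustrative assumptions, not a description of how a future DENSO product would present such images.

```python
import numpy as np

def mark_generated(image: np.ndarray, generated: np.ndarray,
                   tint=(255, 0, 0), alpha: float = 0.4) -> np.ndarray:
    """Blend a tint color into the pixels where `generated` is True."""
    out = image.astype(np.float32)
    tint_arr = np.array(tint, dtype=np.float32)
    out[generated] = (1 - alpha) * out[generated] + alpha * tint_arr
    return np.round(out).astype(np.uint8)

# 2x2 mid-gray image in which only the top-left pixel was filled in by the generator.
image = np.full((2, 2, 3), 100, dtype=np.uint8)
generated = np.array([[True, False], [False, False]])

marked = mark_generated(image, generated)
print(marked[0, 0].tolist())  # [162, 60, 60]: tinted toward red
print(marked[1, 1].tolist())  # [100, 100, 100]: camera pixels are left untouched
```

Only the masked pixels change, so the driver can still see the unmodified camera imagery everywhere else.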

Our current project is a trial to explore the potential of virtual environments for us. If we want to guarantee correct image appearances in the future, one option would be to physically install cameras in the actual parking lots. By attaching cameras to the ceiling of a parking garage, for example, we could capture real top-down images. Taking advantage of this, one approach would be to place easily identifiable markings on the ground surface in predetermined patterns in order to make recognition of spatial characteristics easier.

If we were to pursue development in this manner, our first task would be to determine how many vehicle-mounted sensors are required to produce adequate results, what kind of work is required on the parking lot, and so forth.

Rethinking vehicle-related development based on virtual environments

Which aspects of using virtual environments for vehicle-related development do you find most fascinating?

Koguchi: I love that we can pursue things that would be difficult or impossible by testing physical vehicles due to safety and reliability concerns. I worked in the video game industry for 15 years, and when I began to feel that the games were becoming similar to reality itself, I decided to switch jobs. DENSO is a great place to work if you’re an engineer who wants to challenge yourself by pursuing various new technologies and techniques.

In recent years, I hear a lot of talk about virtual environments, particularly concepts like the metaverse and digital twins. Japanese video game technologies are on the cutting edge, and Japan is leading the world in virtual environment technologies through various trials. I look forward to seeing a lot of big breakthroughs in the future, and I will continue trying to come up with and implement new ideas for virtual environments. This is what I hope to achieve through my work in ADAS development.

I think vehicle-related development operations will experience some major changes in the coming years.

Koguchi: DENSO’s technological development approach has always been to take highly focused action after confirming things onsite and in person, based on the actual conditions. I consider this approach to be vital for ensuring user safety and peace of mind. To enhance the results produced by this method, I hope to make good use of virtual environments and develop better technologies in the future.
